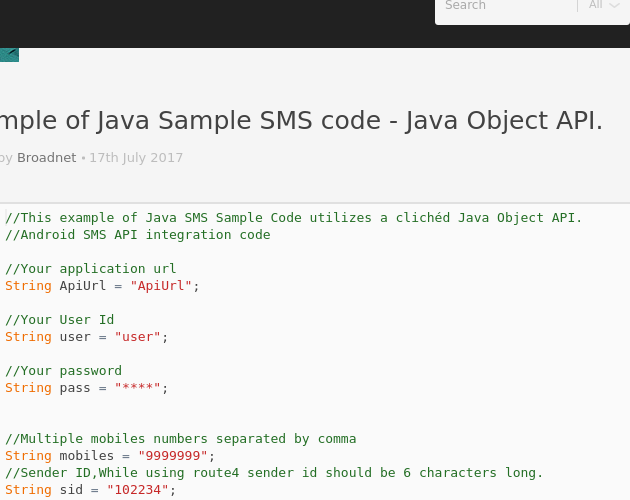

`endpoints/base/:test` operation (includes ':') in version 3.1. Here we show how to use a speech-to-text API with two Java examples.

Endpoints are applicable for Custom Speech. You must deploy a custom endpoint to use a Custom Speech model. This table includes all the operations that you can perform on endpoints. See Deploy a model for examples of how to manage deployment endpoints.

Datasets are applicable for Custom Speech. You can use datasets to train and test the performance of different models. For example, you can compare the performance of a model trained with a specific dataset to the performance of a model trained with a different dataset. This table includes all the operations that you can perform on datasets. See Upload training and testing datasets for examples of how to upload datasets.

Some operations support webhook notifications. You can register your webhooks where notifications are sent.

We previously investigated text to speech, so let's take a look at how browsers handle recognising and transcribing speech with the SpeechRecognition API. Because some browsers still expose the prefixed names, the constructors are usually resolved with a fallback: `const SpeechRecognition = window.SpeechRecognition || window.webkitSpeechRecognition;`, `const SpeechGrammarList = window.SpeechGrammarList || window.webkitSpeechGrammarList;` and `const SpeechRecognitionEvent = window.SpeechRecognitionEvent || window.…`.

Are you asking whether you can record voice without invoking the UI?
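The prefixed-constructor fallback described above can be wrapped in a small helper. This is only a minimal sketch: the function names `resolveSpeechApi` and `createRecognizer` are hypothetical, and the global object is passed in as a parameter (an assumption made here so the logic can also be exercised outside a browser).

```javascript
// Hypothetical helper: look up the Web Speech API constructors on a
// global object, falling back to the webkit-prefixed names.
function resolveSpeechApi(globalObj) {
  const Recognition =
    globalObj.SpeechRecognition || globalObj.webkitSpeechRecognition || null;
  const GrammarList =
    globalObj.SpeechGrammarList || globalObj.webkitSpeechGrammarList || null;
  return { Recognition, GrammarList, supported: Recognition !== null };
}

// Hypothetical helper: build a recognizer for one language, or return
// null when the browser does not expose speech recognition at all.
function createRecognizer(globalObj, lang) {
  const { Recognition } = resolveSpeechApi(globalObj);
  if (!Recognition) return null;
  const recognizer = new Recognition();
  recognizer.lang = lang;             // BCP 47 tag, e.g. 'en-US'
  recognizer.interimResults = false;  // only deliver final results
  recognizer.maxAlternatives = 1;     // one transcript per result
  return recognizer;
}
```

In a browser you would presumably call `createRecognizer(window, 'en-US')`, attach an `onresult` handler, and then call `start()` on the returned object; a `null` return signals that recognition is unavailable.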

Set the appropriate model when calling the speech API: for English, the `video` model works, while for French use `command_and_search`. The Web Speech API has two functions: speech synthesis, otherwise known as text to speech, and speech recognition, or speech to text. The extracted audio file should be made available either through Cloud Storage or sent as a ByteString. Use your own storage accounts for logs, transcription files, and other data. To extract the audio track from a video as FLAC, run `ffmpeg -i sample.webm -vn -acodec flac sample.flac`.
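If the extraction step needs to be scripted, the same ffmpeg invocation can be driven from Node.js. This is a rough sketch under stated assumptions: `buildFlacArgs` and `extractFlac` are hypothetical names, and `ffmpeg` is assumed to be installed and on the `PATH`.

```javascript
const { execFileSync } = require('child_process');

// Hypothetical helper: the argument list for
// `ffmpeg -i <input> -vn -acodec flac <output>`.
function buildFlacArgs(inputPath, outputPath) {
  // -vn drops the video stream; -acodec flac encodes the audio as FLAC.
  return ['-i', inputPath, '-vn', '-acodec', 'flac', outputPath];
}

// Hypothetical helper: run ffmpeg synchronously and return the output path.
// Assumes an `ffmpeg` binary is available on the PATH; throws if it fails.
function extractFlac(inputPath, outputPath) {
  execFileSync('ffmpeg', buildFlacArgs(inputPath, outputPath), {
    stdio: 'inherit',
  });
  return outputPath;
}
```

Calling `extractFlac('sample.webm', 'sample.flac')` would run the same command as above. Passing the arguments as an array avoids shell quoting problems with unusual file names.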
